Structure from Motion

This project implements a complete incremental Structure-from-Motion (SfM) pipeline that jointly reconstructs the 3D structure of a scene and estimates the camera pose of each image, taking as input a set of 2D photographs and the known camera intrinsics. It was developed as part of the RBE549 Computer Vision course at Worcester Polytechnic Institute.

Pipeline

1. Feature Matching — SIFT features are extracted and matched across image pairs. Matches are filtered first by homography constraints, then by RANSAC to remove outliers.

2. Fundamental Matrix Estimation — The fundamental matrix F is computed using the 8-point algorithm with Hartley normalization and RANSAC-based outlier rejection.

3. Essential Matrix & Camera Pose — The essential matrix E is recovered from F and the known camera intrinsics. Four candidate poses are extracted via SVD, and the correct one is selected using cheirality checks (all reconstructed points must be in front of both cameras).

4. Linear Triangulation — 3D world coordinates for matched feature pairs are recovered using the Direct Linear Transform (DLT), establishing the initial point cloud.

5. Non-Linear Triangulation — Triangulated points are refined by minimizing reprojection error using Levenberg-Marquardt optimization.

6. Perspective-n-Point (PnP) — New cameras are registered using Linear PnP (DLT-based) followed by Non-Linear PnP refinement. RANSAC is applied to handle outlier correspondences.

7. Bundle Adjustment — All camera poses and 3D points are jointly refined using sparse bundle adjustment to minimize total reprojection error across all views.
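Step 1 does not say how SIFT descriptors are paired. The sketch below assumes brute-force nearest-neighbour matching with Lowe's ratio test, a common choice for SIFT, and is not necessarily what this project does. The function name `match_descriptors` and the `ratio=0.8` default are hypothetical, and the homography and RANSAC filtering that follow matching are not shown.

```python
import numpy as np


def match_descriptors(d1, d2, ratio=0.8):
    """Brute-force descriptor matching with Lowe's ratio test (hypothetical helper).

    d1: (N1, D) and d2: (N2, D) float descriptors (e.g. 128-D SIFT), N2 >= 2.
    Returns an (M, 2) integer array of index pairs (i into d1, j into d2).
    """
    # Pairwise Euclidean distances via |a|^2 + |b|^2 - 2 a.b, clipped at 0
    # to absorb round-off before the square root.
    sq = (np.sum(d1 ** 2, axis=1)[:, None]
          + np.sum(d2 ** 2, axis=1)[None, :]
          - 2.0 * d1 @ d2.T)
    dists = np.sqrt(np.maximum(sq, 0.0))
    # Nearest and second-nearest neighbour in d2 for every descriptor in d1.
    order = np.argsort(dists, axis=1)
    rows = np.arange(len(d1))
    nearest, second = order[:, 0], order[:, 1]
    # Keep a match only if it is clearly better than the runner-up.
    keep = dists[rows, nearest] < ratio * dists[rows, second]
    return np.column_stack([rows[keep], nearest[keep]])
```

The ratio test rejects descriptors whose best match is ambiguous, which removes many outliers before any geometric check is run.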
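The normalized 8-point algorithm with RANSAC from step 2 can be sketched in NumPy as follows. This is a minimal illustration, not the project's code: the helper names, the 500 iterations and the 1 px² threshold on the Sampson error (a first-order approximation of the squared distance to the epipolar line) are assumptions. Points are (N, 2) pixel arrays, and F satisfies x2^T F x1 = 0 in homogeneous coordinates.

```python
import numpy as np


def hartley_normalize(pts):
    """Move points to their centroid and scale so the mean distance is sqrt(2).

    Returns (N, 3) homogeneous normalized points and the 3x3 transform T.
    """
    centroid = pts.mean(axis=0)
    mean_dist = np.linalg.norm(pts - centroid, axis=1).mean()
    s = np.sqrt(2.0) / mean_dist
    T = np.array([[s, 0.0, -s * centroid[0]],
                  [0.0, s, -s * centroid[1]],
                  [0.0, 0.0, 1.0]])
    pts_h = np.column_stack([pts, np.ones(len(pts))])
    return pts_h @ T.T, T


def eight_point(x1, x2):
    """Estimate F from N >= 8 correspondences with Hartley normalization."""
    p1, T1 = hartley_normalize(x1)
    p2, T2 = hartley_normalize(x2)
    # Each correspondence gives one row of A f = 0, f = F flattened row-wise.
    A = np.column_stack([
        p2[:, 0] * p1[:, 0], p2[:, 0] * p1[:, 1], p2[:, 0],
        p2[:, 1] * p1[:, 0], p2[:, 1] * p1[:, 1], p2[:, 1],
        p1[:, 0], p1[:, 1], np.ones(len(p1)),
    ])
    _, _, Vt = np.linalg.svd(A)
    F = Vt[-1].reshape(3, 3)
    # Enforce rank 2 by zeroing the smallest singular value.
    U, S, Vt = np.linalg.svd(F)
    S[2] = 0.0
    F = U @ np.diag(S) @ Vt
    # Undo the normalization: p2^T Fn p1 = x2^T (T2^T Fn T1) x1.
    F = T2.T @ F @ T1
    return F / np.linalg.norm(F)


def sampson(F, x1, x2):
    """Sampson error of each correspondence (roughly a squared pixel distance)."""
    x1h = np.column_stack([x1, np.ones(len(x1))])
    x2h = np.column_stack([x2, np.ones(len(x2))])
    Fx1 = x1h @ F.T    # row i is F @ x1_i
    Ftx2 = x2h @ F     # row i is F^T @ x2_i
    num = np.sum(x2h * Fx1, axis=1) ** 2
    den = Fx1[:, 0] ** 2 + Fx1[:, 1] ** 2 + Ftx2[:, 0] ** 2 + Ftx2[:, 1] ** 2
    return num / den


def ransac_fundamental(x1, x2, iters=500, thresh=1.0, seed=0):
    """RANSAC over minimal 8-point samples; returns F and the inlier mask."""
    rng = np.random.default_rng(seed)
    best = np.zeros(len(x1), dtype=bool)
    for _ in range(iters):
        idx = rng.choice(len(x1), 8, replace=False)
        inliers = sampson(eight_point(x1[idx], x2[idx]), x1, x2) < thresh
        if inliers.sum() > best.sum():
            best = inliers
    # Re-estimate F from every inlier of the best hypothesis.
    return eight_point(x1[best], x2[best]), best
```

Normalizing before solving keeps the columns of A at comparable magnitudes, which is what makes the linear estimate usable with raw pixel coordinates.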
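For step 3, here is a sketch of recovering E and picking one of the four candidate poses. It assumes both images share the same K, camera 1 sits at the origin, and points map into camera 2 as x_cam2 = R X + t. Instead of the DLT triangulation from step 4, this example gets the two depths of each correspondence from a small least-squares solve to stay short; a candidate passes the cheirality test for a point when both depths are positive, and the candidate with the most passing points is kept. All function names are hypothetical.

```python
import numpy as np


def essential_from_fundamental(F, K):
    """E = K^T F K, projected so its singular values are (1, 1, 0)."""
    U, _, Vt = np.linalg.svd(K.T @ F @ K)
    return U @ np.diag([1.0, 1.0, 0.0]) @ Vt


def candidate_poses(E):
    """The four (R, t) decompositions of E, with x_cam2 = R X + t."""
    U, _, Vt = np.linalg.svd(E)
    # E is only defined up to sign, so flip U and Vt to proper rotations;
    # every candidate R below then has det(R) = +1.
    if np.linalg.det(U) < 0:
        U = -U
    if np.linalg.det(Vt) < 0:
        Vt = -Vt
    W = np.array([[0.0, -1.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])
    return [(R, sign * U[:, 2])
            for R in (U @ W @ Vt, U @ W.T @ Vt) for sign in (1.0, -1.0)]


def depths(R, t, m1, m2):
    """Depths (lam1, lam2) with lam1 * R m1 + t ~= lam2 * m2 (least squares)."""
    A = np.column_stack([R @ m1, -m2])
    lam, *_ = np.linalg.lstsq(A, -t, rcond=None)
    return lam


def select_pose(E, K, x1, x2):
    """Pick the candidate that puts the most points in front of both cameras."""
    Kinv = np.linalg.inv(K)
    # Normalized rays with z = 1, so each solved lambda is a depth.
    m1 = np.column_stack([x1, np.ones(len(x1))]) @ Kinv.T
    m2 = np.column_stack([x2, np.ones(len(x2))]) @ Kinv.T
    best, best_count = None, -1
    for R, t in candidate_poses(E):
        count = sum(np.all(depths(R, t, a, b) > 0) for a, b in zip(m1, m2))
        if count > best_count:
            best, best_count = (R, t), count
    return best
```

Counting passing points, rather than requiring every point to pass, keeps the selection robust when a few noisy matches triangulate behind a camera. The recovered t has unit length, since the two-view geometry fixes translation only up to scale.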
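Step 4's linear triangulation can be written compactly: each view contributes two rows of a homogeneous system A X = 0 (from x × P X = 0), and the point is the right singular vector of A with the smallest singular value. A minimal sketch, assuming 3x4 projection matrices P = K [R | t] and pixel coordinates; `triangulate_dlt` is a hypothetical name.

```python
import numpy as np


def triangulate_dlt(P1, P2, x1, x2):
    """Linear (DLT) triangulation of matched pixels into Euclidean 3D points.

    P1, P2: 3x4 projection matrices; x1, x2: (N, 2) matched pixel coordinates.
    Returns an (N, 3) array.
    """
    points = []
    for (u1, v1), (u2, v2) in zip(x1, x2):
        # u * (p3 . X) - (p1 . X) = 0 and v * (p3 . X) - (p2 . X) = 0 per view.
        A = np.stack([u1 * P1[2] - P1[0],
                      v1 * P1[2] - P1[1],
                      u2 * P2[2] - P2[0],
                      v2 * P2[2] - P2[1]])
        # Least-squares null vector: last row of Vt (smallest singular value).
        _, _, Vt = np.linalg.svd(A)
        Xh = Vt[-1]
        points.append(Xh[:3] / Xh[3])
    return np.array(points)
```

Because the DLT minimizes an algebraic rather than a geometric error, its output is a good starting point for the reprojection-error refinement in step 5 rather than a final answer.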
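A sketch of the non-linear triangulation in step 5 for a single point, using a hand-rolled Levenberg-Marquardt loop with a forward-difference Jacobian. The damping schedule (divide or multiply λ by 10), the iteration count and the helper names are assumptions for illustration; a fuller version would more likely use analytic Jacobians or a library solver.

```python
import numpy as np


def reprojection_residuals(X, Ps, xs):
    """Stacked (u, v) reprojection errors of one 3D point X in every camera."""
    Xh = np.append(X, 1.0)
    res = []
    for P, x in zip(Ps, xs):
        p = P @ Xh
        res.extend(p[:2] / p[2] - x)
    return np.array(res)


def refine_point(X0, Ps, xs, iters=20, lam=1e-3, eps=1e-6):
    """Minimize the summed squared reprojection error over X with LM."""
    X = np.asarray(X0, dtype=float).copy()
    cost = np.sum(reprojection_residuals(X, Ps, xs) ** 2)
    for _ in range(iters):
        r = reprojection_residuals(X, Ps, xs)
        # Forward-difference Jacobian of the residuals w.r.t. X (2M x 3).
        J = np.empty((len(r), 3))
        for k in range(3):
            dX = np.zeros(3)
            dX[k] = eps
            J[:, k] = (reprojection_residuals(X + dX, Ps, xs) - r) / eps
        H = J.T @ J
        # Marquardt damping: scale the diagonal of the Gauss-Newton matrix.
        step = np.linalg.solve(H + lam * np.diag(np.diag(H)), -J.T @ r)
        new_cost = np.sum(reprojection_residuals(X + step, Ps, xs) ** 2)
        if new_cost < cost:
            # Accept the step and move toward Gauss-Newton.
            X, cost, lam = X + step, new_cost, lam * 0.1
        else:
            # Reject the step and move toward gradient descent.
            lam *= 10.0
    return X
```

Starting from the DLT estimate, a few iterations are usually enough, since the linear solution already lies close to the minimum of the reprojection error.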
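The linear PnP of step 6 can be sketched as a DLT on normalized image coordinates: solve for a 3x4 matrix proportional to [R | t], project its left 3x3 block onto the nearest rotation, and use the singular values to undo the unknown scale. This assumes a known K, the convention x_cam = R X + t, and at least six non-coplanar 2D-3D correspondences. The RANSAC loop and the non-linear PnP refinement are omitted, and `linear_pnp` is a hypothetical name.

```python
import numpy as np


def linear_pnp(X, x, K):
    """Estimate (R, t) with x ~ K [R | t] X from N >= 6 correspondences.

    X: (N, 3) world points; x: (N, 2) pixel coordinates.
    """
    # Normalized image coordinates, so the DLT recovers alpha * [R | t].
    xh = np.column_stack([x, np.ones(len(x))])
    xn = xh @ np.linalg.inv(K).T
    Xh = np.column_stack([X, np.ones(len(X))])
    rows = []
    for Xi, (u, v, _) in zip(Xh, xn):
        zero = np.zeros(4)
        # p1 . X - u (p3 . X) = 0 and p2 . X - v (p3 . X) = 0.
        rows.append(np.concatenate([Xi, zero, -u * Xi]))
        rows.append(np.concatenate([zero, Xi, -v * Xi]))
    _, _, Vt = np.linalg.svd(np.array(rows))
    P = Vt[-1].reshape(3, 4)
    # Nearest rotation to the left block; its singular values are ~|alpha|.
    U, S, Vt = np.linalg.svd(P[:, :3])
    R = U @ Vt
    t = P[:, 3] / S.mean()
    # det(U Vt) equals sign(alpha); flip both R and t if alpha was negative.
    if np.linalg.det(R) < 0:
        R, t = -R, -t
    return R, t
```

Projecting onto the nearest rotation matters with noisy data, where the raw 3x3 block is generally not orthonormal.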
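The sparsity exploited in step 7 comes from the fact that each reprojection residual depends on exactly one camera (6 parameters here) and one point (3 parameters). The sketch below assumes a rotation-vector parameterization, one shared K, and the convention x_cam = R X + t; it builds the stacked residual vector and the matching boolean Jacobian pattern. All names are hypothetical. A solver that uses such a pattern, for example SciPy's `scipy.optimize.least_squares` with its `jac_sparsity` argument (method "trf"), would then minimize the residuals.

```python
import numpy as np


def rodrigues(rvec):
    """Rotation vector (unit axis * angle in radians) to a 3x3 rotation matrix."""
    theta = np.linalg.norm(rvec)
    if theta < 1e-12:
        return np.eye(3)
    k = rvec / theta
    Kx = np.array([[0.0, -k[2], k[1]],
                   [k[2], 0.0, -k[0]],
                   [-k[1], k[0], 0.0]])
    return np.eye(3) + np.sin(theta) * Kx + (1.0 - np.cos(theta)) * (Kx @ Kx)


def ba_residuals(params, n_cams, n_pts, cam_idx, pt_idx, obs, K):
    """Stacked (u, v) reprojection residuals for every observation.

    params: [rvec (3), t (3)] per camera, then [X, Y, Z] per point.
    cam_idx, pt_idx: integer NumPy arrays; observation i is point pt_idx[i]
    seen by camera cam_idx[i] at pixel obs[i].
    """
    cams = params[:6 * n_cams].reshape(n_cams, 6)
    pts = params[6 * n_cams:].reshape(n_pts, 3)
    res = np.empty((len(obs), 2))
    for i, (c, p) in enumerate(zip(cam_idx, pt_idx)):
        X_cam = rodrigues(cams[c, :3]) @ pts[p] + cams[c, 3:]
        proj = K @ X_cam
        res[i] = proj[:2] / proj[2] - obs[i]
    return res.ravel()  # rows 2i (u) and 2i + 1 (v) belong to observation i


def ba_sparsity(n_cams, n_pts, cam_idx, pt_idx):
    """Boolean Jacobian pattern: each residual row touches 6 camera + 3 point columns."""
    n_obs = len(cam_idx)
    pattern = np.zeros((2 * n_obs, 6 * n_cams + 3 * n_pts), dtype=bool)
    obs_ids = np.arange(n_obs)
    for row in (2 * obs_ids, 2 * obs_ids + 1):  # u-residual and v-residual rows
        for k in range(6):
            pattern[row, 6 * cam_idx + k] = True
        for k in range(3):
            pattern[row, 6 * n_cams + 3 * pt_idx + k] = True
    return pattern
```

Each row of the pattern has only 9 non-zeros regardless of scene size, which is why a sparse solver scales to many cameras and points where a dense Jacobian would not.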

3D Reconstruction Results

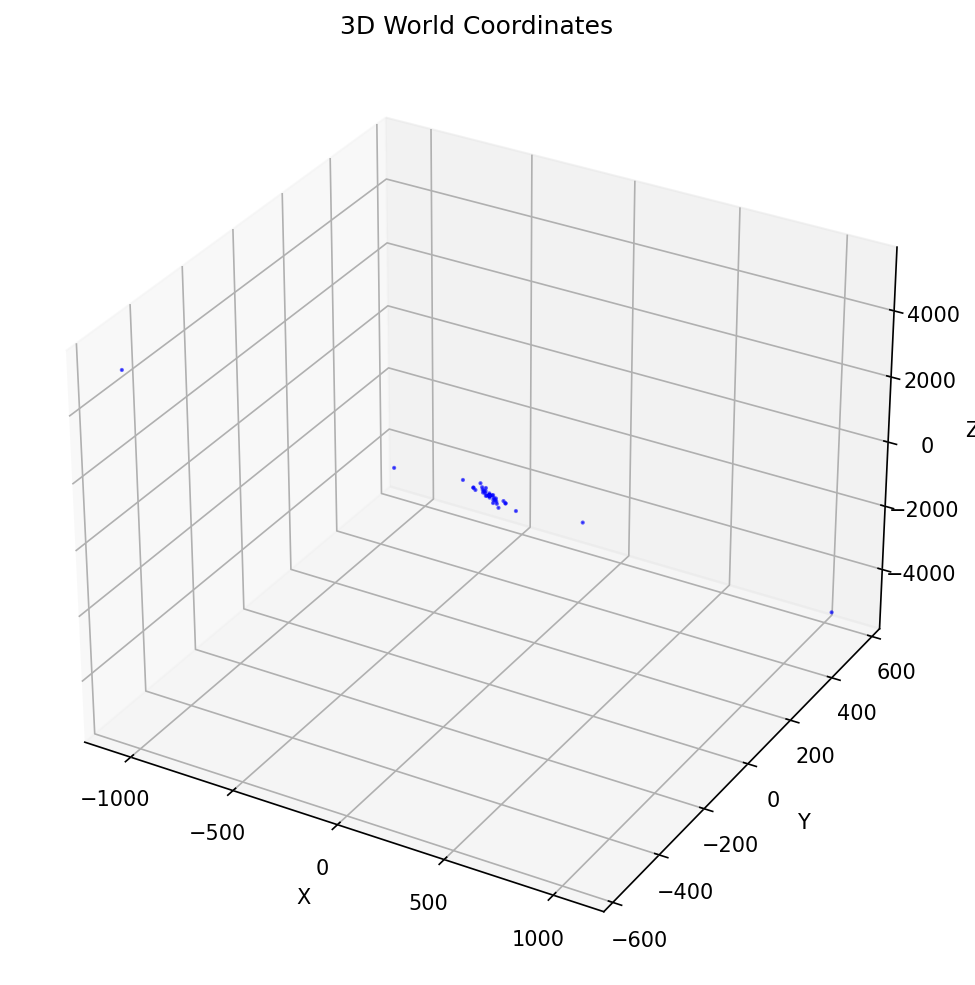

Initial Triangulation

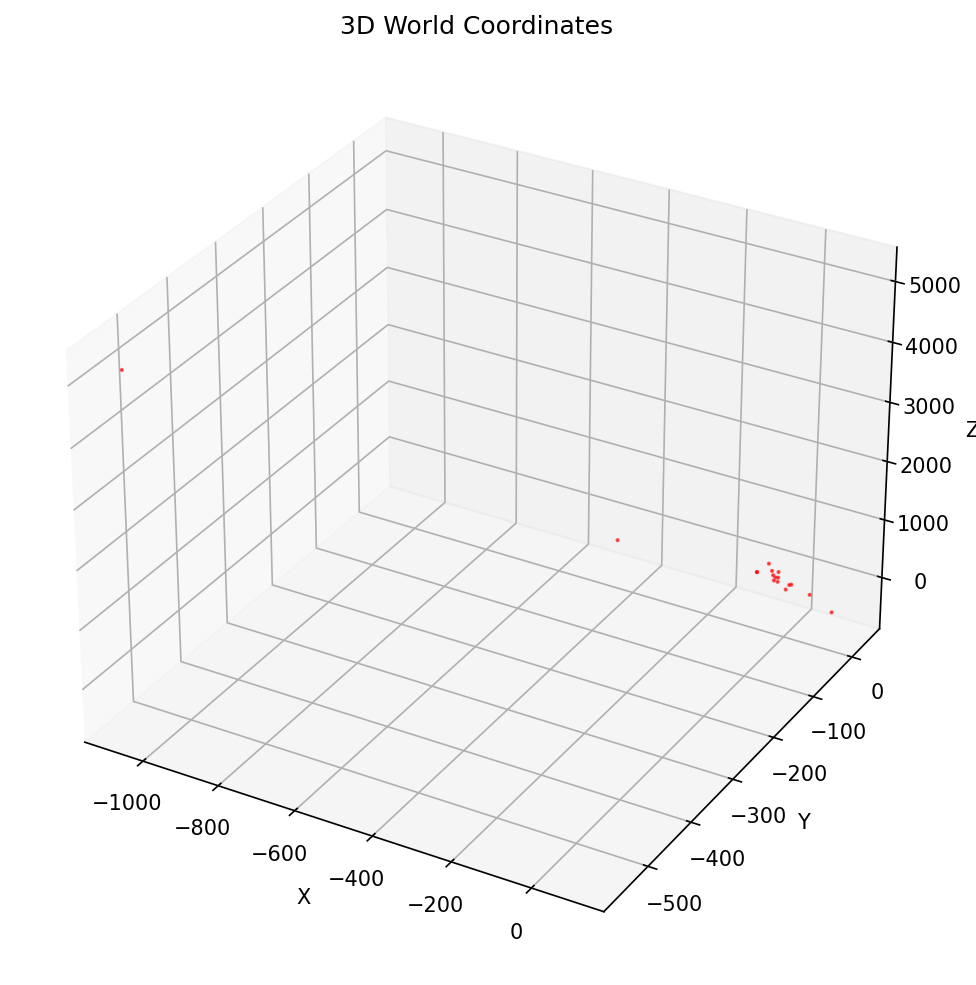

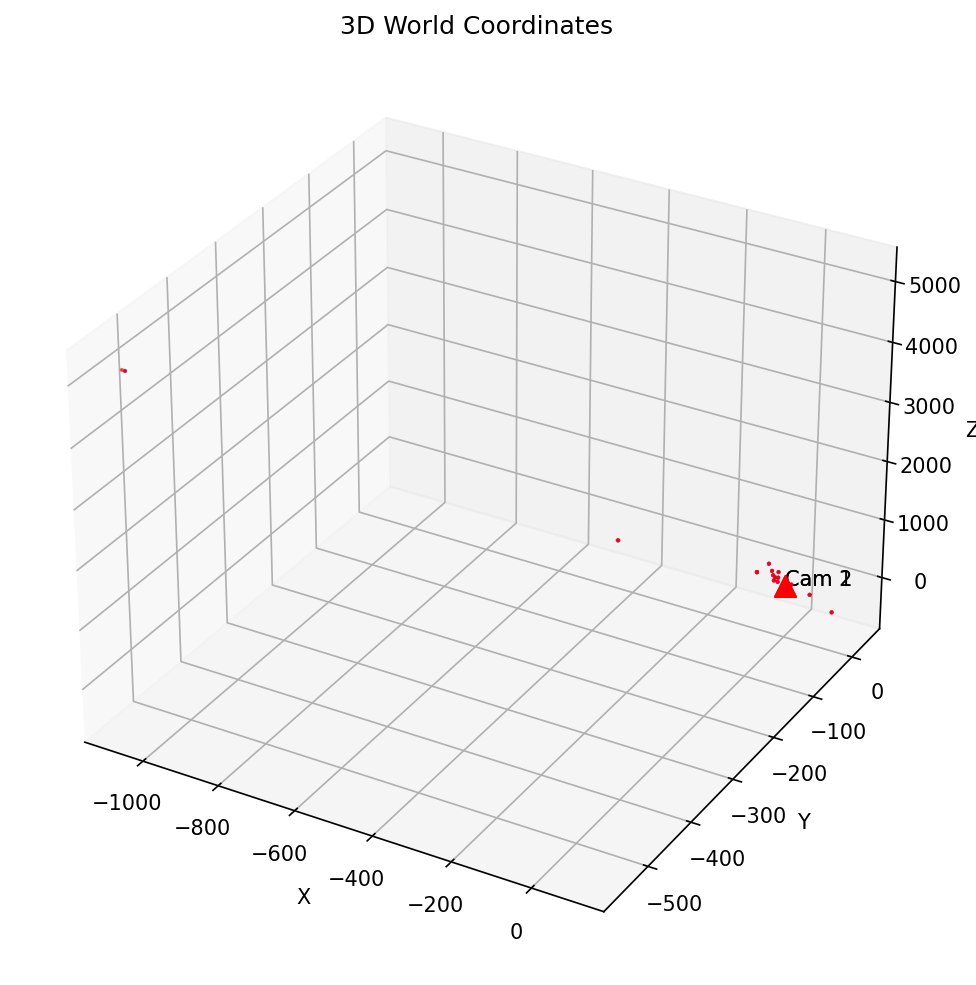

After Cheirality Check

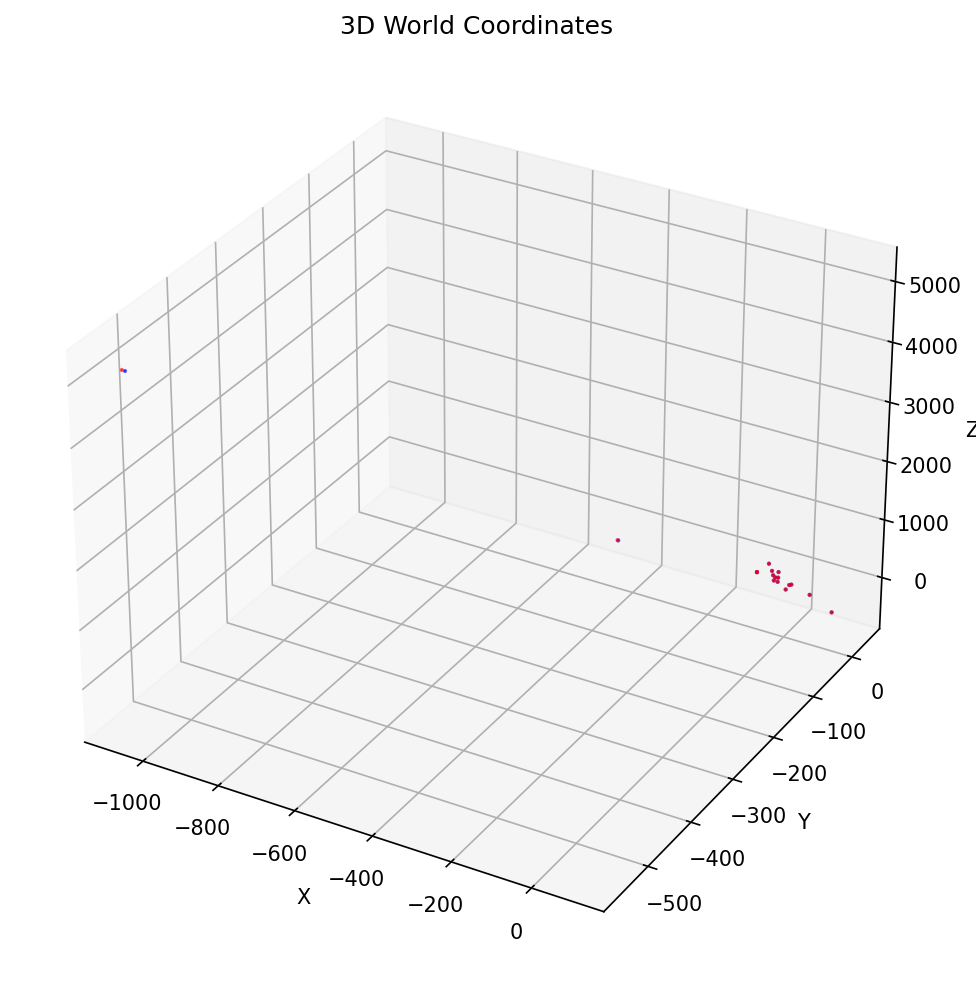

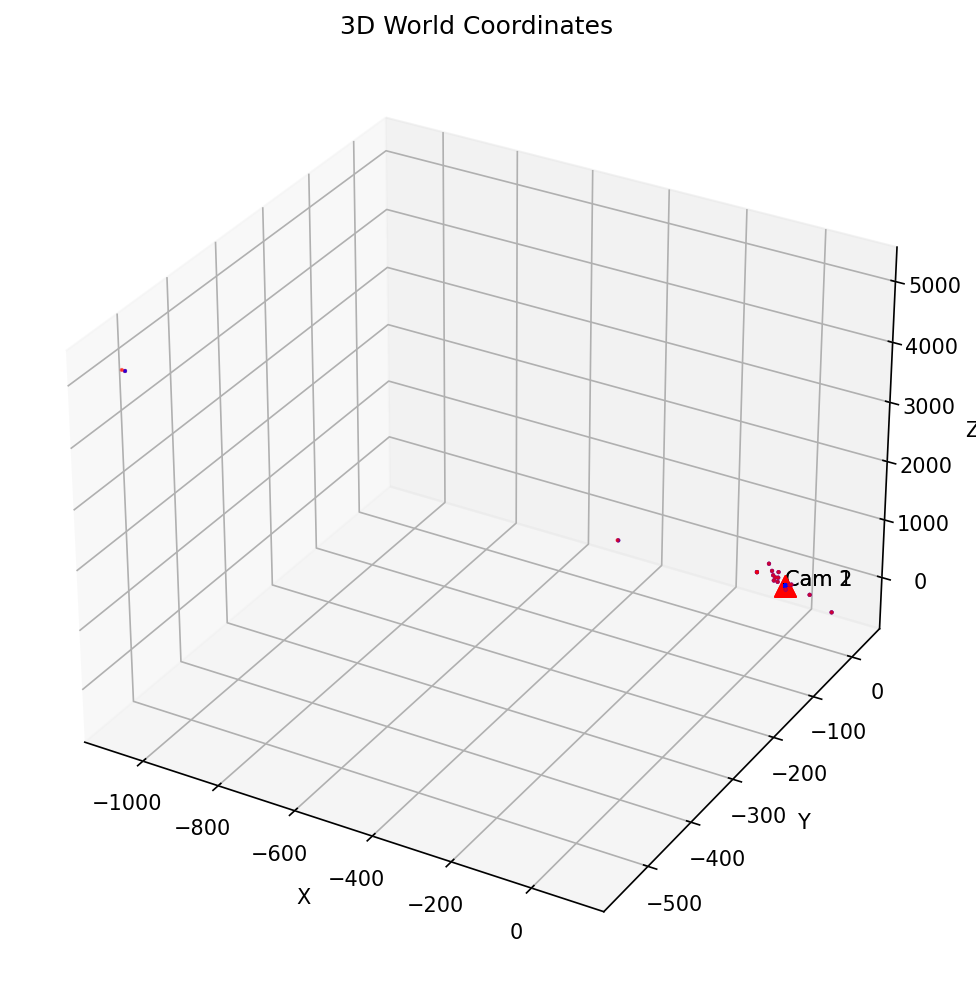

After Non-Linear Refinement

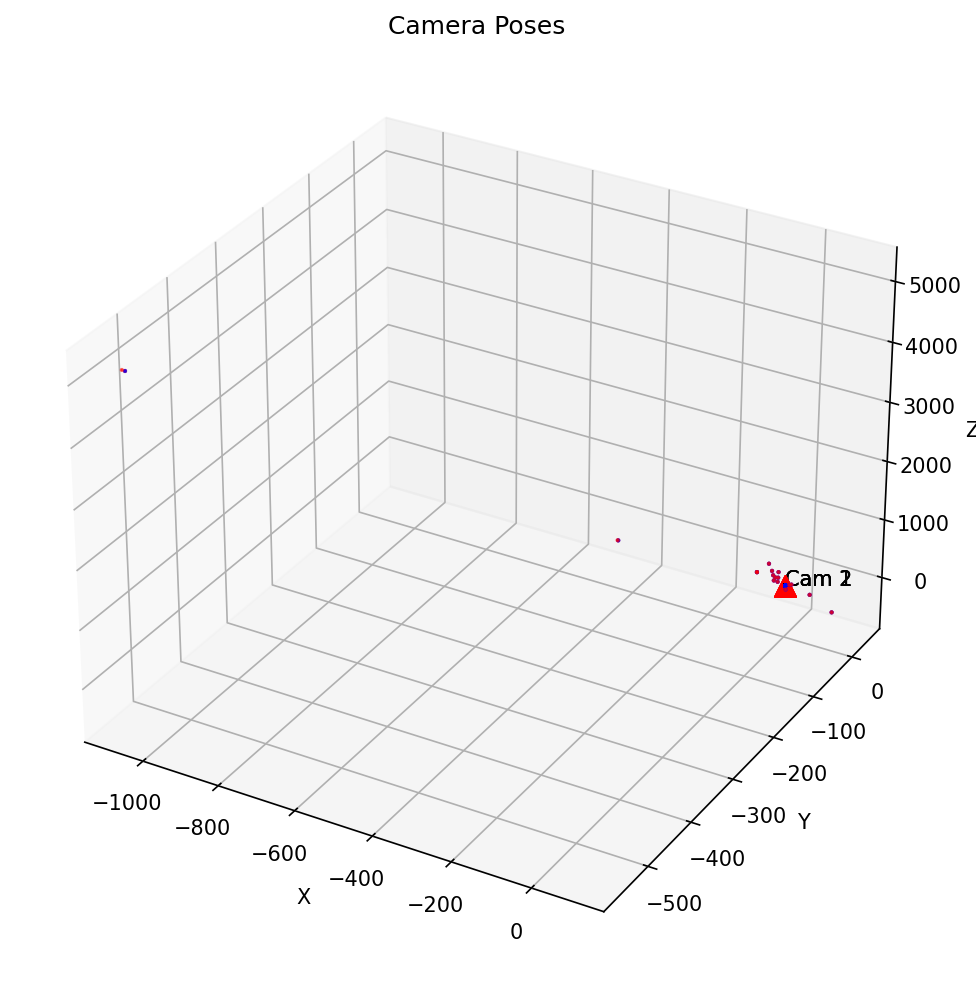

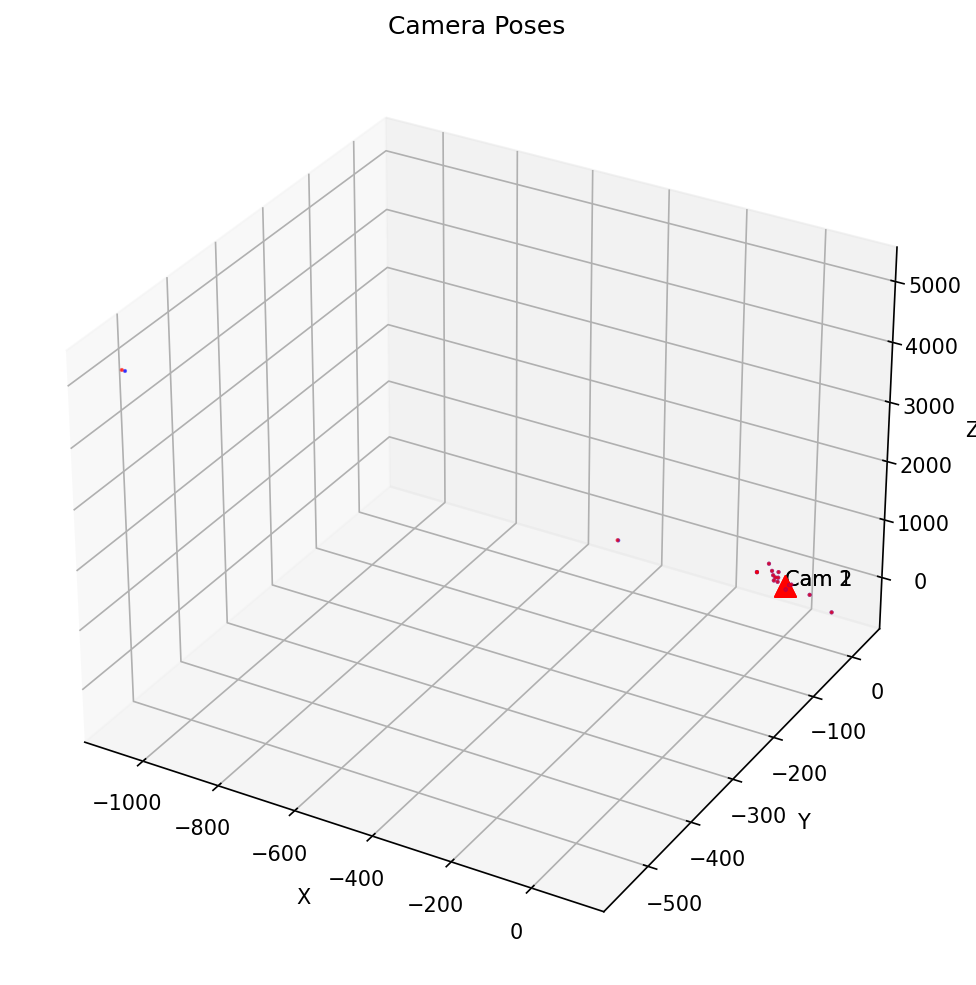

With Camera Pose (Image Pair 1-2)

Bundle Adjustment

Before Bundle Adjustment

After Bundle Adjustment

Final Point Cloud with Camera Poses