Extended Kalman Filter

This project implements an Extended Kalman Filter (EKF) for robot state estimation by fusing data from an Inertial Measurement Unit (IMU) with visual pose estimates from AprilTag landmarks, developed as part of the RBE595 Robot Navigation course at Worcester Polytechnic Institute. Results are validated against VICON motion capture ground truth.

Why Extended?

Unlike the standard Kalman Filter, the EKF handles nonlinear system dynamics. IMU-based motion propagation and camera projection are both nonlinear operations, requiring Jacobian linearization at each timestep. Jacobians are computed analytically using SymPy for symbolic differentiation.

System Architecture

State Vector — 15 states: 3D position, 3D velocity, orientation (quaternion), and IMU biases (gyroscope + accelerometer).

Prediction Step (IMU) — At each IMU sample, the state is propagated forward by integrating accelerometer and gyroscope measurements. The state covariance is updated using the linearized Jacobian of the process model.

Update Step (Vision) — When an AprilTag observation is available, a Perspective-n-Point (PnP) algorithm estimates the camera pose. The residual between predicted and observed pose is used to correct the state estimate via the Kalman gain.

Sensor Fusion — The IMU provides high-frequency motion predictions between observations, while AprilTags supply low-frequency but accurate pose corrections, complementing each other’s weaknesses.

Results

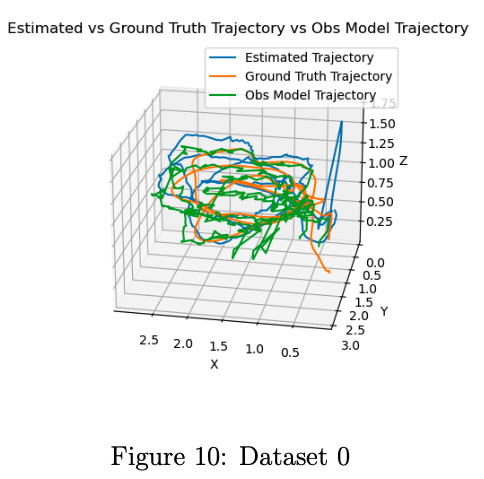

EKF vs Ground Truth vs Observation Model (3D Trajectory)

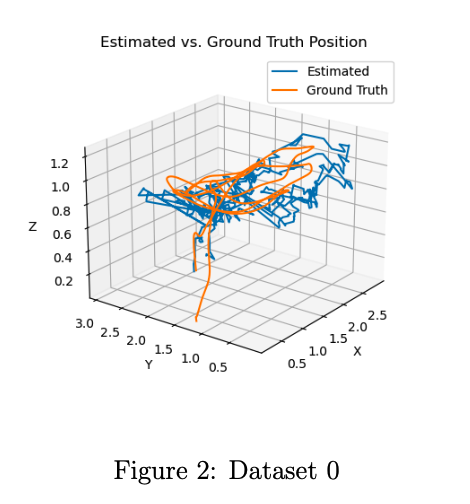

Position Estimation Accuracy

Orientation Tracking (Roll / Pitch / Yaw)

The EKF trajectory closely follows the VICON ground truth, outperforming the raw observation model by smoothing sensor noise and handling measurement dropouts.