Goal-Driven Navigation via Deep Reinforcement Learning

This project trains a mobile robot to autonomously navigate toward randomly placed goals while avoiding obstacles, using the TD3 (Twin Delayed Deep Deterministic Policy Gradient) algorithm. The system runs in ROS Noetic + Gazebo 11, with a Velodyne 3D LiDAR and RGB camera as sensor inputs, outputting continuous linear and angular velocity commands.

Training Demo

Simulation Environment & Sensors

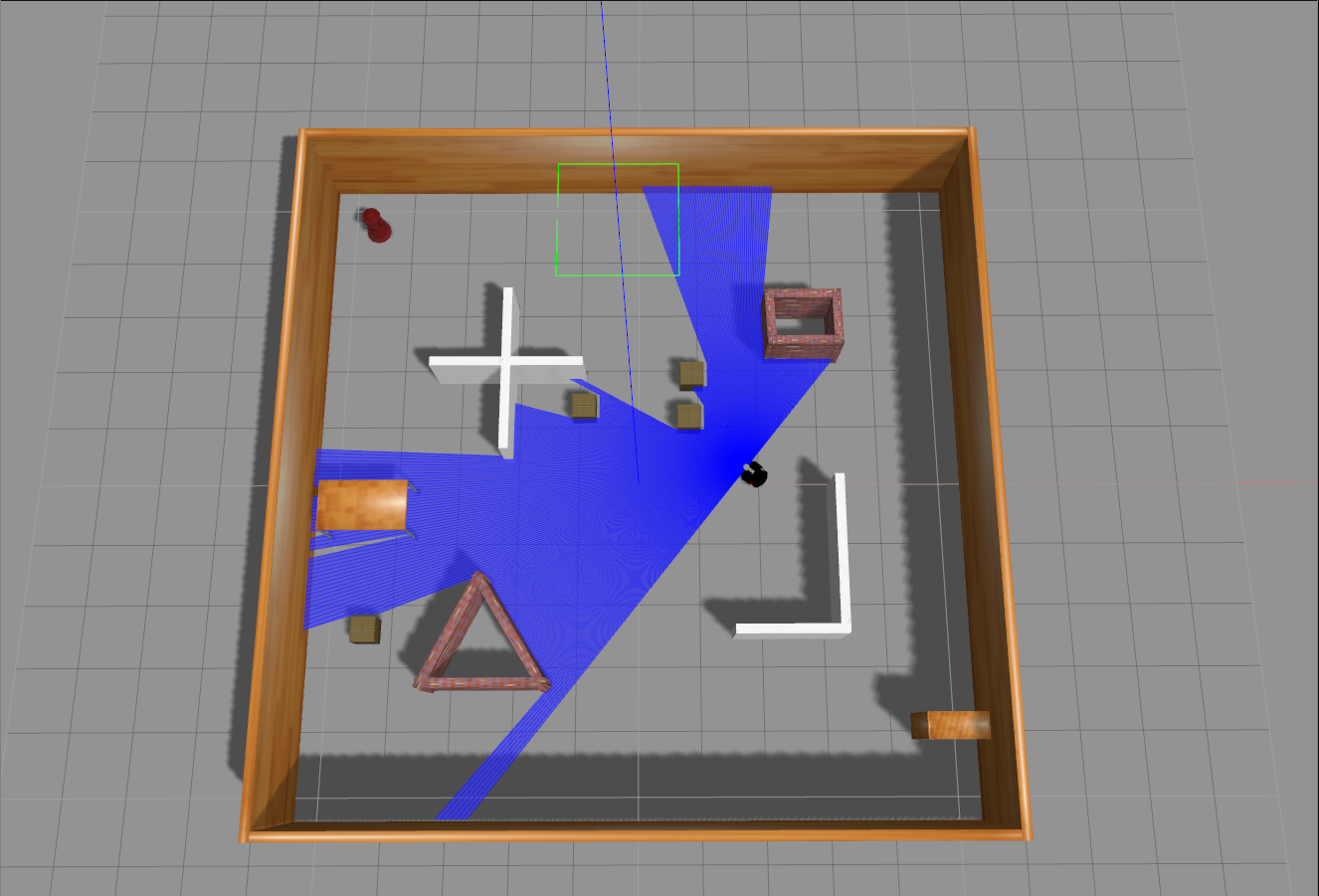

Gazebo Environment

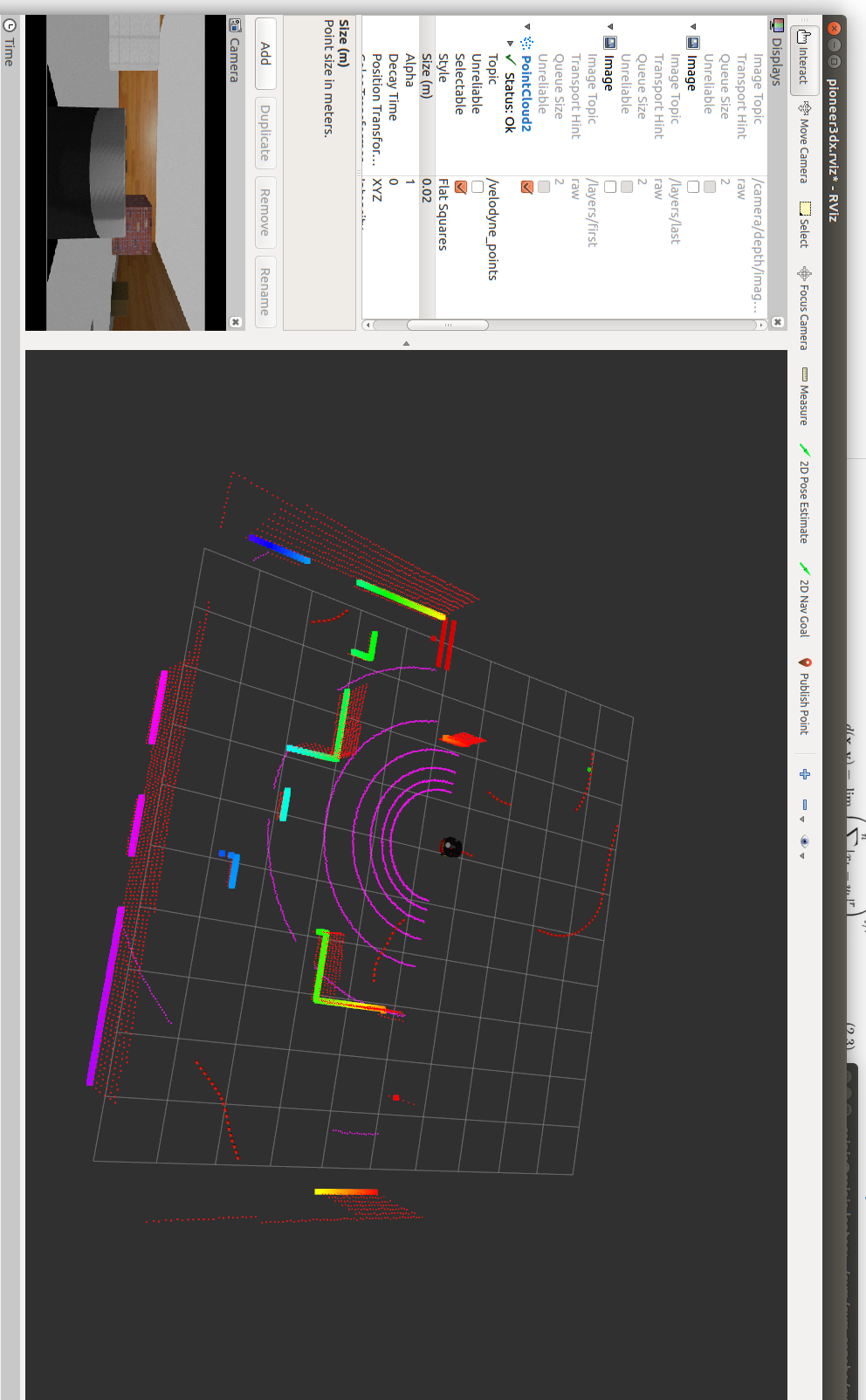

Velodyne LiDAR (RViz)

Algorithm: TD3

Twin Delayed Deep Deterministic Policy Gradient (TD3) addresses the overestimation bias of standard actor-critic methods through three key modifications:

1. Clipped Double Q-Learning — Two independent critic networks estimate the Q-value; the minimum is used for the TD target, reducing overestimation.

2. Delayed Policy Updates — The actor and target networks update less frequently than the critics (every 2 critic updates), allowing the value estimates to stabilize before the policy changes.

3. Target Policy Smoothing — Noise is added to the target action during critic updates, smoothing the value function and reducing exploitation of sharp Q-function peaks.

System Architecture

| Component | Details |

|---|---|

| Simulation | Gazebo 11, ROS Noetic |

| Sensors | Velodyne 3D LiDAR, RGB camera |

| Action space | Continuous linear + angular velocity |

| State space | LiDAR scan + goal direction + distance |

| RL Algorithm | TD3 (actor-critic) |

| Deep learning | PyTorch 1.10+ |

| Training monitor | TensorBoard |

The actor network outputs deterministic velocity commands given the current sensor state. The dual critic networks estimate the expected cumulative reward, providing a stable learning signal via the Bellman equation.