AutoPano: Image Stitching

AutoPano is an automated panoramic image stitching system developed as part of a geometric computer vision course at Worcester Polytechnic Institute. The system merges multiple overlapping photographs into seamless wide-angle composites through two implementations: a classical computer vision pipeline (Phase 1) and a deep learning-based approach (Phase 2).

Phase 1: Classical Pipeline

The classical pipeline processes image pairs through six sequential stages, from raw input to stitched panorama.

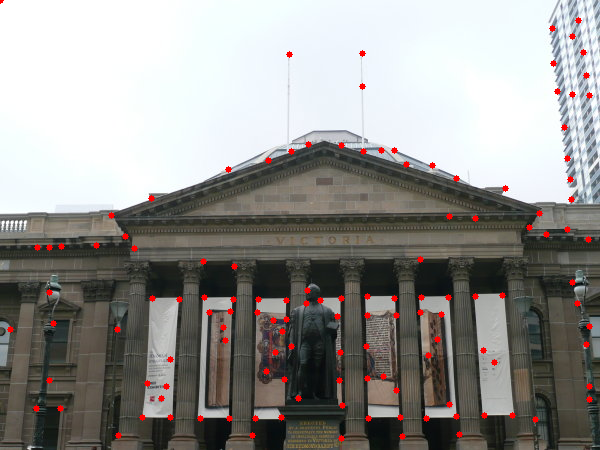

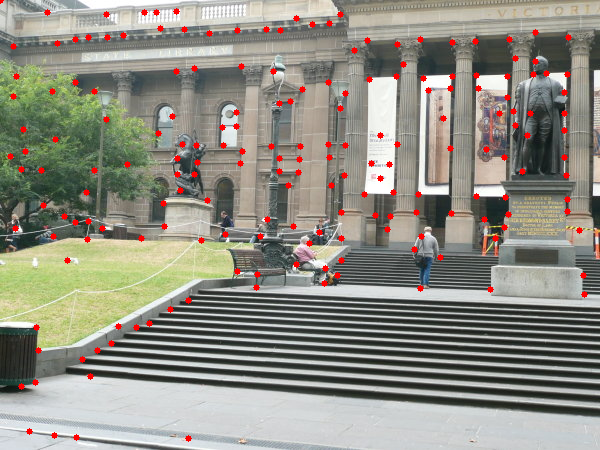

Step 1: Corner Detection

Salient keypoints are identified using the Harris and Shi-Tomasi corner detection algorithms, locating geometrically distinctive regions across each image.

Step 2: Adaptive Non-Maximal Suppression (ANMS)

Raw corner responses are filtered using ANMS to retain only the most distinctive corners while enforcing even spatial distribution — preventing feature clustering near high-gradient regions.

Step 3: Feature Descriptor Extraction

An 8×8 patch-based descriptor is extracted around each retained corner. Patches are Gaussian-smoothed and normalized to produce robust, comparison-ready feature vectors.

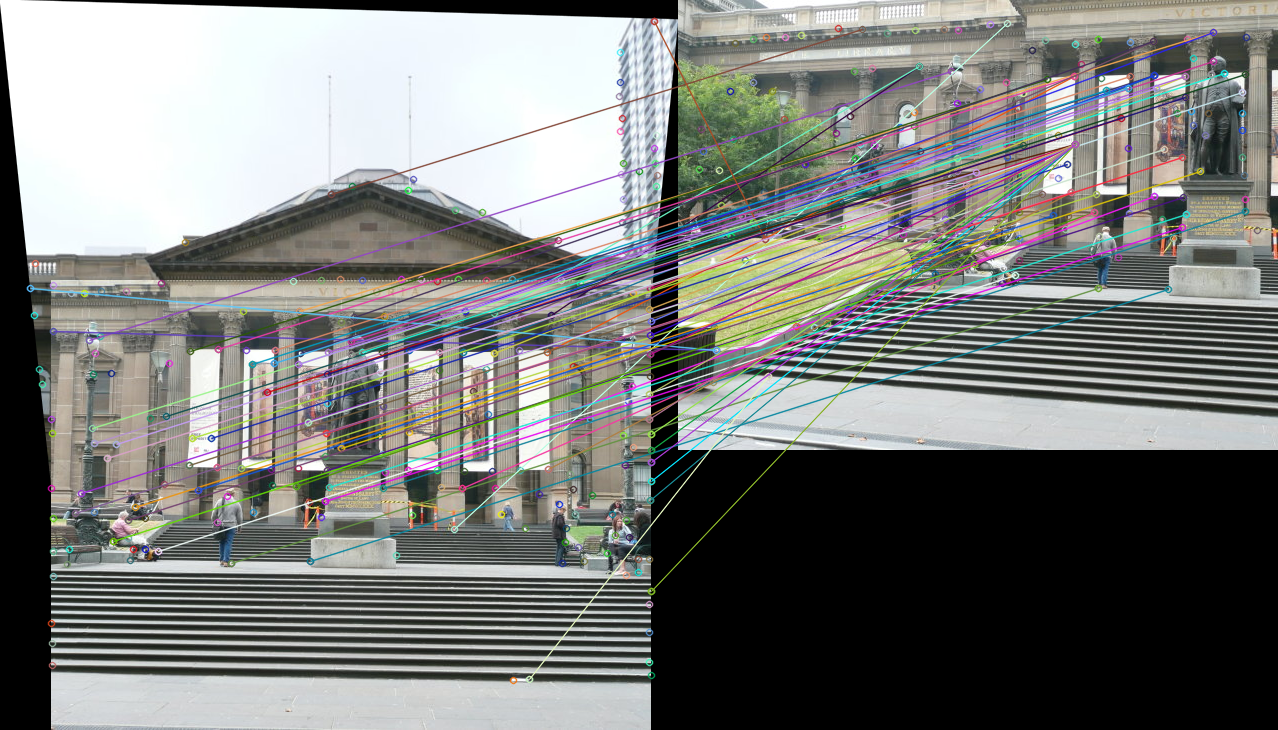

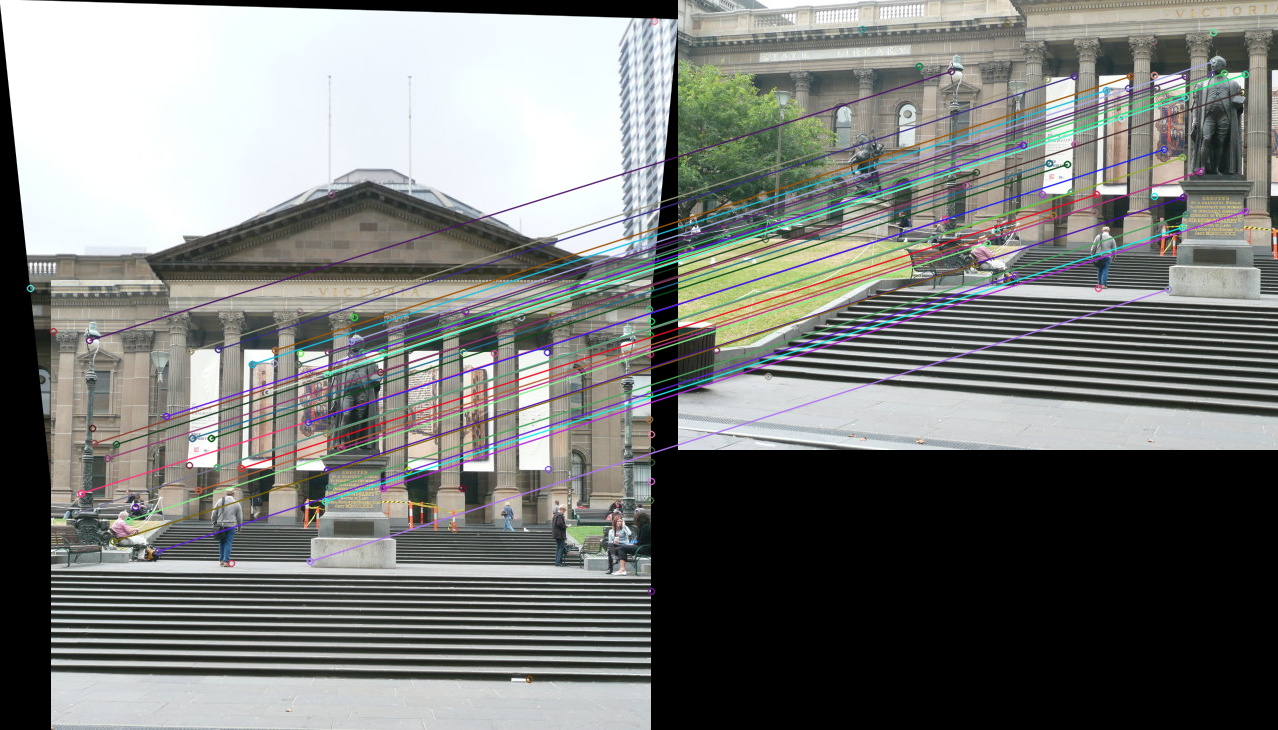

Step 4: Feature Matching

Correspondences between image pairs are established via ratio-test validation, filtering ambiguous matches by comparing distances to the best and second-best candidate descriptors.

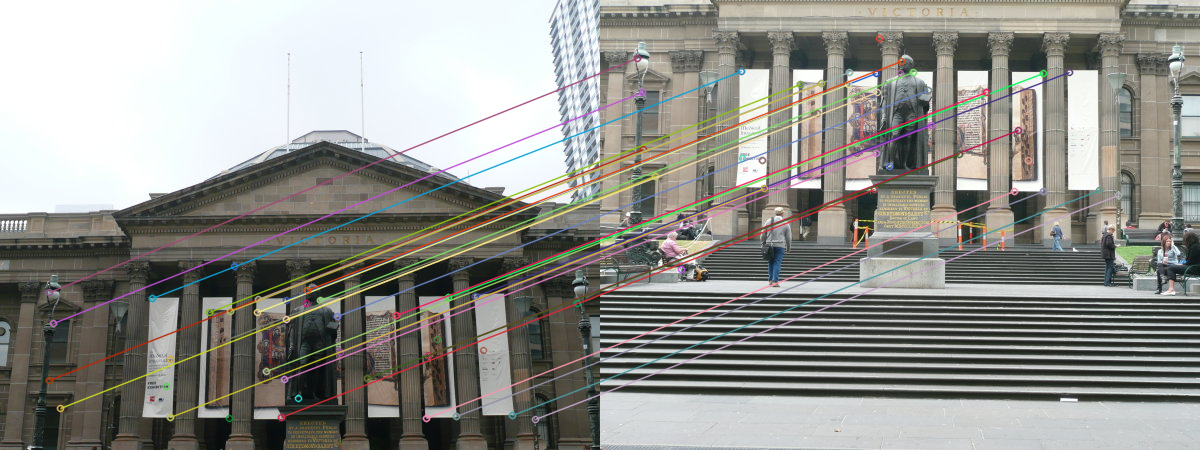

Step 5: RANSAC Homography Estimation

A robust homography matrix is computed using RANSAC (Random Sample Consensus), which iteratively identifies the largest set of inlier matches while rejecting outliers caused by noise or mismatches.

Step 6: Image Warping & Final Panorama

The estimated homography warps images into a common coordinate frame. Blending resolves seams at overlap boundaries to produce the final seamless panorama.

Phase 2: Deep Learning Approach

Phase 2 replaces the hand-crafted feature pipeline with a neural network trained to directly predict the homography between image pairs. Rather than detecting and matching keypoints explicitly, the network learns a mapping from image patches to the 4-point parametrization of the geometric transformation, enabling end-to-end differentiable training.